Spin up a notebook with 4TB of RAM, add a GPU, connect to a distributed cluster of workers, and more. Saturn Cloud is your all-in-one solution for data science & ML development, deployment, and data pipelines in the cloud. By using Python and the Boto3 library, we can automate this workflow and make it easy to migrate or synchronize data between different Redshift environments. In this blog post, we’ve discussed the best approach to script the process of unloading and copying data between two Redshift clusters. Replace the placeholders with your actual values and run the script to unload and copy data between your Redshift clusters. Import boto3 import psycopg2 # Define your Redshift and S3 parameters here source_redshift_cluster_id = 'your-source-redshift-cluster-id' destination_redshift_cluster_id = 'your-destination-redshift-cluster-id' redshift_db = 'your-redshift-db' redshift_user = 'your-redshift-user' redshift_password = 'your-redshift-password' unload_query = "your-unload-query" copy_query = "your-copy-query" s3_bucket_name = 'your-bucket-name' s3_prefix = 'your-prefix/' # Unload data from the source Redshift cluster to Amazon S3 unload_data_to_s3 ( source_redshift_cluster_id, redshift_db, redshift_user, redshift_password, unload_query ) # Copy data from Amazon S3 to the destination Redshift cluster copy_data_from_s3 ( destination_redshift_cluster_id, redshift_db, redshift_user, redshift_password, copy_query ) # (Optional) Clean up the temporary S3 files delete_s3_files ( s3_bucket_name, s3_prefix ) Here’s a Python function to delete the temporary S3 files: To do this, you can use the delete_objects method of the Boto3 S3 client. commit () Step 3: (Optional) Clean Up the Temporary S3 FilesĪfter copying the data to the destination Redshift cluster, you may want to clean up the temporary S3 files created during the unload process. connect ( conn_string ) as conn : with conn. 
describe_clusters ( ClusterIdentifier = redshift_cluster_id ) cluster = response redshift_host = cluster redshift_port = cluster conn_string = f "dbname=''" with psycopg2. client ( 'redshift' ) response = redshift. Import boto3 def unload_data_to_s3 ( redshift_cluster_id, redshift_db, redshift_user, redshift_password, unload_query ): redshift = boto3. Please refer to this code as experimental only since we cannot currently guarantee its validity ⚠ This code is experimental content and was generated by AI. To do this, we’ll use the UNLOAD command, which exports the result of a query to one or more text or CSV files in an S3 bucket.

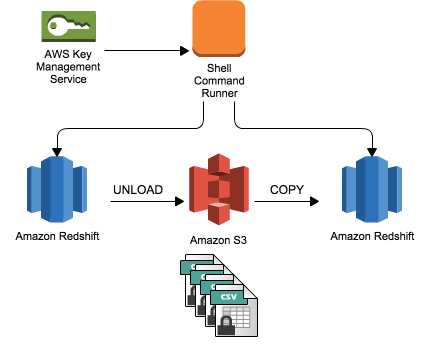

Step 1: Unload Data from the Source Redshift Cluster to Amazon S3įirst, we need to unload the data from the source Redshift cluster to an Amazon S3 bucket. We’ll use Python and the Boto3 library to interact with AWS services and automate these steps.

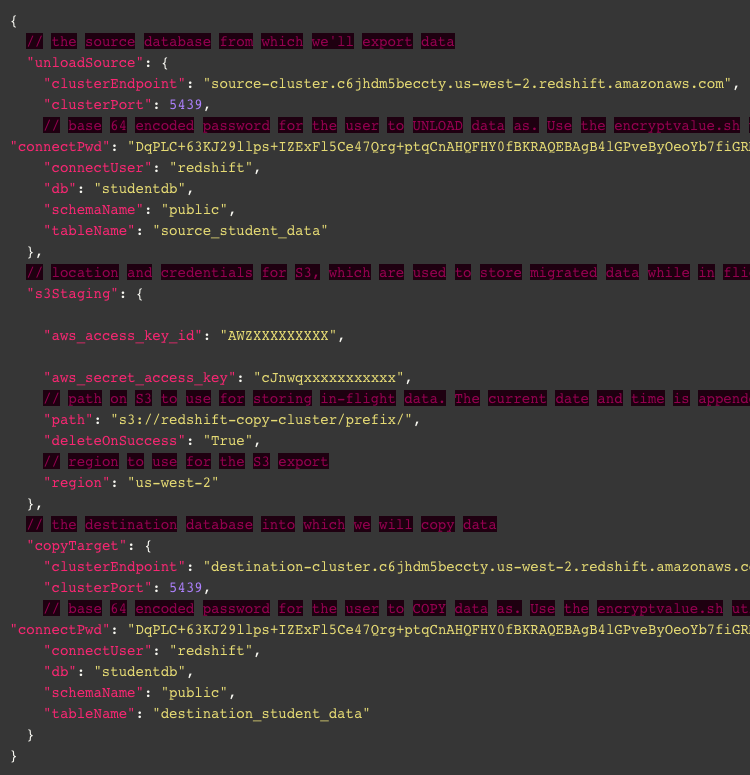

(Optional) Clean up the temporary S3 files.Copy data from Amazon S3 to the destination Redshift cluster.Unload data from the source Redshift cluster to Amazon S3.The process of unloading and copying data between two Redshift clusters can be broken down into the following steps: Boto3 library installed ( pip install boto3).AWS CLI installed and configured with the necessary permissions.Prerequisitesīefore we dive into the details, make sure you have the following: We’ll go through the steps involved in this process and provide a Python script that automates the entire workflow. This is a common scenario for data scientists and engineers who need to migrate or synchronize data between different Redshift environments. In this blog post, we’ll discuss the best approach to script the process of unloading data from one Amazon Redshift cluster and copying it to another. | Miscellaneous ⚠ content generated by AI for experimental purposes only The Best Approach to Script Data Unloading and Copying Between Two Redshift Clusters

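The script also leaves `unload_query` and `copy_query` as placeholders. The sketch below shows one way to assemble them, assuming Parquet as the interchange format, an IAM role (the ARN shown is a placeholder) that is associated with both clusters and can read and write the bucket, and a destination table that already exists with matching columns. The helper names `build_unload_query` and `build_copy_query` are hypothetical.

```python
def build_unload_query(select_sql: str, s3_path: str, iam_role_arn: str) -> str:
    """Build an UNLOAD statement that writes Parquet files under s3_path."""
    # UNLOAD takes the SELECT as a quoted string literal, so any single
    # quotes inside it must be doubled
    escaped_sql = select_sql.replace("'", "''")
    return (
        f"UNLOAD ('{escaped_sql}') "
        f"TO '{s3_path}' "
        f"IAM_ROLE '{iam_role_arn}' "
        "FORMAT AS PARQUET"
    )


def build_copy_query(table_name: str, s3_path: str, iam_role_arn: str) -> str:
    """Build a COPY statement that loads every Parquet file under s3_path."""
    return (
        f"COPY {table_name} "
        f"FROM '{s3_path}' "
        f"IAM_ROLE '{iam_role_arn}' "
        "FORMAT AS PARQUET"
    )


if __name__ == "__main__":
    # Hypothetical values; replace them with your own bucket, prefix, role and table
    s3_path = "s3://your-bucket-name/your-prefix/"
    role = "arn:aws:iam::123456789012:role/your-redshift-role"
    print(build_unload_query("SELECT * FROM sales WHERE region = 'EU'", s3_path, role))
    print(build_copy_query("sales", s3_path, role))
```

Passing the same `s3_path` (built from `s3_bucket_name` and `s3_prefix`) to both helpers keeps the two steps pointed at the same files, because COPY loads every object whose key starts with the given prefix.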